I’m throwing caution to the wind: Michael Jackson did Sonic 3 and that’s that. In case you didn’t know, much ink has been spilled over whether or not MJ actually wrote the soundtrack to the greatest game on one of our favourite consoles. It’s certainly no secret he enjoyed playing video games. Debating it any longer is futile. How could you argue with this man?

Now that it’s settled, it’s worth considering how Michael managed to work within the confines of a 16-bit gaming system. The limitations of the system required the music to be composed as chiptune. For a comprehensive understanding of how chiptune music is generated you can watch the video below. If you just want to bludge, I’ll explain what you need to know when we get to it.

To explain how these basic constraints affected Michael, we need to look at the differences between an 8-bit and a 16-bit system. The one thing you should know about chiptune is its defining feature: all music must be generated in real time by the console’s sound hardware, rather than played back as a recording by software running on the device. For the sake of looking at Sonic 3, it’s easiest for us to compare the Sega Mega Drive (16-bit) with the Sega Master System (8-bit). While the Mega Drive has a 16-bit main processor, its sound is usually driven by an 8-bit co-processor (a Zilog Z80), which makes it a perfect candidate for this analysis.

There are two types of chips used to create sound in a classic game console: PSG (Programmable Sound Generator) chips and FM (Frequency Modulation) chips. PSG chips work by synthesising multiple waveforms and mixing them with the output of a noise generator to create a single waveform whose amplitude is then manipulated. The final result is a decent mimicry of a sound that can be found in the real world. An FM chip is essentially a synthesiser. In fact, the concept behind the FM chip in both systems originates from Yamaha’s famous line of synthesisers.

Both systems use the Texas Instruments SN76489 PSG chip. For our purposes all we need to know is that it contains three square-wave tone generators and one white-noise generator, and that it can operate at various frequencies and sixteen different volume levels.
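If you want to poke at that idea yourself, here is a rough Python sketch of three square waves and a noise source being mixed into one signal. Everything named here is my own assumption for illustration: the clock value is the NTSC figure commonly quoted for this chip, the divider formula and the 2 dB volume steps are the usual datasheet description, and `random` is only a stand-in for the chip's real shift-register noise.

```python
import random

CLOCK_HZ = 3_579_545   # assumed NTSC clock; PAL machines run slightly slower
SAMPLE_RATE = 44_100   # output rate for this sketch, not a property of the chip


def tone_frequency(divider: int) -> float:
    """Square-wave frequency for a 10-bit divider value (1..1023)."""
    return CLOCK_HZ / (32 * divider)


def volume_gain(level: int) -> float:
    """Turn a 4-bit level (0 = loudest, 15 = silent) into a linear gain."""
    if level >= 15:
        return 0.0
    return 10 ** (-2 * level / 20)  # each step is 2 dB quieter


def square(freq_hz: float, t: float) -> float:
    """A square wave is only ever fully high or fully low."""
    return 1.0 if (t * freq_hz) % 1.0 < 0.5 else -1.0


def mix(tones, noise_level: int, n_samples: int, seed: int = 0):
    """Mix up to three (divider, level) tones with one noise channel."""
    rng = random.Random(seed)  # stand-in for the chip's noise generator
    out = []
    for i in range(n_samples):
        t = i / SAMPLE_RATE
        sample = sum(volume_gain(level) * square(tone_frequency(div), t)
                     for div, level in tones)
        sample += volume_gain(noise_level) * rng.choice((-1.0, 1.0))
        out.append(sample / 4)  # four sources, so the sum stays within [-1, 1]
    return out


# A chord on the three tone channels with the noise channel silenced.
chord = mix([(254, 0), (127, 4), (64, 8)], noise_level=15, n_samples=100)
```

A divider of 254 lands very close to concert A (about 440 Hz), which gives you a feel for how coarse the tuning gets as the divider shrinks and the notes climb.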

The Texas Instruments SN76489 PSG chip (ironically made in England, apparently)

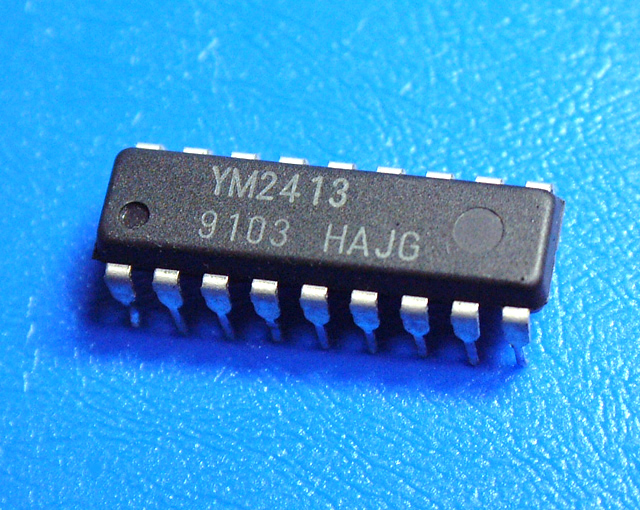

Between the two consoles there is a significant increase in what the FM chips are capable of. The Yamaha YM2413 found in the Sega Master System is not only older, it has also had a lot of functionality removed in order to be cost effective at the mass production level. Such a pared-down model leaves 15 hard-coded instrument settings with one left over to be user-defined. Only 2 waveforms are included and there’s no adder to mix the channels. Instead, the chip’s digital-to-analogue converter simply plays one channel after the other.

The Yamaha YM2413

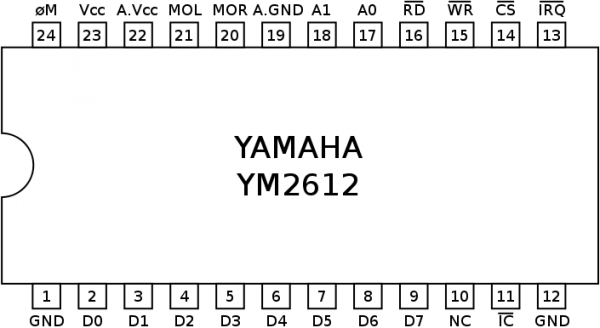

By comparison, the Yamaha YM2612 has an analogue stereo output (with its own built-in digital-to-analogue converter). The obvious difference is that it can certainly handle more than one channel playing at once. In fact, it can handle 6, with up to 4 operators working on each channel. The third channel’s operators can have their frequencies set independently, allowing for dissonant harmonics.
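To give a feel for what frequency modulation actually does, here is a minimal Python sketch in which one sine-wave oscillator (usually called an operator) bends the phase of another. It only uses two operators and the function and parameter names are mine, so treat it as an illustration of the principle rather than a model of how the YM2612 arranges the oscillators on a channel.

```python
import math


def fm_sample(t: float, carrier_hz: float, ratio: float, index: float) -> float:
    """One sample of a two-operator FM voice at time t (in seconds).

    ratio is the modulator frequency relative to the carrier, and index
    controls how hard the modulator pushes the carrier's phase around.
    """
    modulator = math.sin(2 * math.pi * carrier_hz * ratio * t)
    return math.sin(2 * math.pi * carrier_hz * t + index * modulator)


def render(carrier_hz: float, ratio: float, index: float,
           n_samples: int, sample_rate: int = 44_100):
    """Render a short burst of the voice as a list of floats in [-1, 1]."""
    return [fm_sample(i / sample_rate, carrier_hz, ratio, index)
            for i in range(n_samples)]


plain = render(440.0, 2.0, 0.0, 200)    # index 0: just a sine wave
bright = render(440.0, 2.0, 1.5, 200)   # higher index: more overtones
```

With the index at zero you get a pure sine wave. Raising it adds overtones, and a non-whole-number `ratio` makes those overtones clash with the note, which is roughly the kind of dissonance independent operator frequencies make possible.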

A diagram of the Yamaha YM2612 chip

Having covered these differences, we can see how what Linus Akesson talks about in his lecture works in the real world. The key element to consider here is that constraints shape the creative process: when technical limitations impose themselves on a composition, the composer can work around them, accept them or choose to ignore them. He breaks his lecture down into 4 parts, which I will apply to Sonic 3 in order.

1. Waveforms

The 1-bit square wave is handling the bass in this song. You can hear the flat buzz that defines this kind of wave during a solo at the 1:00 mark. This flatness is a product of the way 1-bit music is generated. The chip then sets an external parameter on the function before passing it through the DAC. Also note the flange effect on the backing tracks. This effect is created by layering the same sound over itself at least once. During the Sega Master System era, one pass of the flange would be all that they could afford. The scaling up in the YM2612 means that they can apply the flange to multiple instruments.
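Both ideas are easy to try in a few lines of Python. The sketch below builds a square wave that only ever sits at one of two levels, then layers it over a slightly delayed copy of itself. The names are my own, and a proper flanger sweeps the delay over time, whereas I have kept it fixed to keep things short.

```python
def square_wave(freq_hz: float, n_samples: int, sample_rate: int = 44_100):
    """A two-level wave: every sample is either fully high or fully low."""
    period = sample_rate / freq_hz
    return [1.0 if (i % period) < period / 2 else -1.0
            for i in range(n_samples)]


def layer_with_delay(signal, delay_samples: int):
    """Mix a signal with a copy of itself that starts delay_samples late."""
    out = []
    for i, sample in enumerate(signal):
        delayed = signal[i - delay_samples] if i >= delay_samples else 0.0
        out.append((sample + delayed) / 2)
    return out


bass = square_wave(110.0, 1000)
layered = layer_with_delay(bass, delay_samples=20)
```

Where the two copies agree the signal is reinforced and where they disagree it cancels out, which is where the hollow, swirling character comes from.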

Michael Jackson had a way of using epic captured sounds to make his music feel full of life. The glass-shatter sound is a great example of this. Smooth Criminal is probably the MJ song that features this effect most prominently but he used it in multiple songs. How do you get a computer chip to make this noise? Let’s take a look at how it was used in the Carnival Night level in Sonic 3. It’s in the first few seconds so you don’t have to listen to the whole thing:

By shifting quickly from pulse wave to noise and back, a single sound can be built out of several different ones. Linus identifies several ranges of sounds based on their frequencies. This sound is created in the ‘effect’ range, where changes happening at 10-1000Hz are fast enough to be heard as a single unit of sound. If it had been played in the ‘rhythmic’ range, anywhere below 10Hz, the glass shatter would dissolve into the separate sounds that it is composed of.
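Here is a small Python sketch of that switching trick, flipping between a pulse wave and noise a set number of times per second. The function names and default values are mine, and the 10-1000Hz boundary is simply the range quoted above rather than anything measured.

```python
import random


def alternate_pulse_and_noise(switch_hz: float, duration_s: float,
                              pulse_hz: float = 440.0,
                              sample_rate: int = 44_100, seed: int = 0):
    """Flip between a pulse wave and noise, switch_hz full cycles per second."""
    rng = random.Random(seed)
    out = []
    for i in range(round(duration_s * sample_rate)):
        t = i / sample_rate
        segment = int(t * switch_hz * 2)  # two segments per switching cycle
        if segment % 2 == 0:
            out.append(1.0 if (t * pulse_hz) % 1.0 < 0.5 else -1.0)  # pulse
        else:
            out.append(rng.uniform(-1.0, 1.0))                       # noise
    return out


def in_effect_range(switch_hz: float) -> bool:
    """True when the switching is fast enough to blur into a single sound."""
    return 10 <= switch_hz <= 1000


shatter = alternate_pulse_and_noise(switch_hz=50, duration_s=0.1)
stutter = alternate_pulse_and_noise(switch_hz=4, duration_s=0.5)
```

Write both lists out to a WAV file and the first should come across as one gritty hit, while the second falls apart into a beep-hiss-beep-hiss rhythm.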

2. Pitch

Linus discusses the Atari in this section, so there is very little for us to look at here.

3. Tempo

Gaming consoles have a massive constraint on the tempo of their music. Musical data is processed alongside the video data, and in order to send the signal out to the television both types of data have to take turns being sent to their respective receivers. The music gets its turn in the gap between frames, known as the vertical blanking interval. This binds musical data to the restrictions of the visual data. Television standards differ from region to region, which has implications for their refresh rates.

In both Europe and Australia we use PAL, which has a refresh rate of 50Hz. When the music is interleaved with the picture at that rate, the composer is restricted to bpms of 107, 125 and 150. The USA uses NTSC, which refreshes at 60Hz, changing the possible bpms to 113, 129 and 150. Those bpm values aren’t the only ones available by the way, just the most common. The impact for Michael here is that he is required to write in either 150bpm or another shared value if the music is to be standardised globally. Surprisingly, this is slightly faster than some of his pop hits that feel particularly energised like Beat It, which is 138 bpm. The effect is that songs in Sonic 3 capture Michael’s unique sound while characterising Sonic’s famous speed. If you watched the first video you’ll notice there are a few MJ hits that came after Sonic 3 that are simply pitch-shifted versions of level theme songs, slowed to a more comfortable pace for the artist.
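One way to arrive at those numbers is a bit of arithmetic, sketched below in Python. It rests on two assumptions of mine that are typical of tracker-style music drivers: the music advances once per screen refresh, and there are four steps (rows) to a beat, each lasting a whole number of refreshes.

```python
def bpm(refresh_hz: int, frames_per_row: int, rows_per_beat: int = 4) -> float:
    """Tempo when every row of music lasts a whole number of video frames."""
    return 60 * refresh_hz / (frames_per_row * rows_per_beat)


def round_half_up(value: float) -> int:
    """Round to the nearest whole bpm, with .5 going up."""
    return int(value + 0.5)


for region, refresh_hz, speeds in (("PAL", 50, (7, 6, 5)),
                                   ("NTSC", 60, (8, 7, 6))):
    print(region, [round_half_up(bpm(refresh_hz, s)) for s in speeds])
# PAL [107, 125, 150]
# NTSC [113, 129, 150]
```

Because a row can only last a whole number of frames, the tempos in between simply aren't on the menu, and 150 is the one value in these lists that both regions can hit exactly.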

4. Polyphony

Just because chiptune music uses a limited number of channels doesn’t mean you can only programme one sound per channel. Much like having two hands while playing a piano can create the effect of having two melodies, a chip can be used in the same fashion. In the Hydrocity Zone soundtrack you can hear that the drums and the flute are using the same channel. The fact that the two sounds never overlap is a clear indicator that they are sharing a channel. The result in this song is that they both play off each other in a fashion similar to how the drumbeat and guitar notes are triggered on alternate beats in Beat It. In Beat It the sounds do run over each other, as they aren’t limited by being played through a chip, though they are still struck out of sync with each other. A funk beat emerges from this structure, one that Michael has used in multiple other places.
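The channel-sharing rule can be sketched in Python as a tiny scheduler that merges two parts onto one channel and refuses to let anything overlap. The event format (start tick, length in ticks, name) and the note timings are invented for the example and are not transcribed from the Hydrocity Zone track.

```python
def share_channel(*parts):
    """Merge several parts onto one channel, refusing any overlap."""
    events = sorted(event for part in parts for event in part)
    for (start, length, name), (next_start, _, next_name) in zip(events, events[1:]):
        if start + length > next_start:
            raise ValueError(f"{name} is still sounding when {next_name} starts")
    return events


# Each event is (start tick, length in ticks, name); the values are made up.
drums = [(0, 2, "drum"), (8, 2, "drum"), (16, 2, "drum")]
flute = [(2, 6, "flute"), (10, 6, "flute")]

timeline = share_channel(drums, flute)
print([name for _, _, name in timeline])
# ['drum', 'flute', 'drum', 'flute', 'drum']
```

Every flute note has to get out of the way before the next drum hit lands, which is exactly why the two parts end up trading places instead of sounding together.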

I could continue through so many more elements of the Sonic 3 soundtrack to demonstrate how constraints impact a composer, but I feel like half the fun here is finding them yourself. Check out Linus’ lecture. It’s not just interesting to know how chiptune is composed, it’s also a great starting point if you’re personally interested in getting into game music composition.